HappyHorse 1.0 vs Kling 3.0: A Complete AI Video Comparison

The rapid evolution of AI video generation in 2026 has drawn wide attention to new models, especially HappyHorse 1.0. After topping the Artificial Analysis Video Arena leaderboard in both text-to-video and image-to-video, it has quickly become one of the most discussed AI video generators in the industry. At the same time, Kling 3.0 remains a strong competitor as a production-ready commercial tool. Both are powerful, but they represent fundamentally different approaches to AI video creation, which makes a direct comparison worthwhile.

Part 1. Why Is HappyHorse 1.0 Suddenly Trending in 2026?

HappyHorse 1.0 has rapidly become one of the most talked-about AI video models in 2026, and the main reason behind this surge is its unexpected performance on the Artificial Analysis Video Arena leaderboard.

According to publicly available benchmark data, HappyHorse 1.0 ranked #1 globally in both text-to-video and image-to-video categories, surpassing well-known models such as Kling 3.0 and Seedance 2.0 in blind human evaluations.

What makes this particularly notable is that the ranking is based on Elo scores derived from large-scale human preference voting rather than synthetic metrics. A high ranking therefore reflects what viewers actually preferred in blind comparisons, which covers perceived visual quality, prompt alignment, and realism, and not only technical scores.
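To make the idea of an Elo score from preference votes concrete, here is a minimal sketch of the textbook Elo update applied to a single blind vote between two models. The leaderboard's exact rating procedure is not described here, so the K-factor of 32, the starting ratings, and the function names are illustrative assumptions and not the Arena's actual method.

```python
def expected_score(rating_a: float, rating_b: float) -> float:
    """Probability that model A is preferred over model B under the standard Elo model."""
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / 400.0))


def update_ratings(rating_a: float, rating_b: float, a_preferred: bool, k: float = 32.0):
    """Return the new (rating_a, rating_b) after one human preference vote.

    k is an assumed K-factor; real leaderboards may use a different value
    or a different fitting procedure altogether.
    """
    exp_a = expected_score(rating_a, rating_b)
    score_a = 1.0 if a_preferred else 0.0
    delta = k * (score_a - exp_a)
    # Elo is zero-sum: whatever A gains, B loses.
    return rating_a + delta, rating_b - delta


if __name__ == "__main__":
    # Hypothetical starting ratings for two models, both at 1000.
    model_a, model_b = 1000.0, 1000.0
    # A voter prefers model A's clip in a blind comparison.
    model_a, model_b = update_ratings(model_a, model_b, a_preferred=True)
    print(model_a, model_b)
```

Because each vote moves ratings in proportion to how surprising the result was, a model only climbs to the top by winning consistently against strong opponents across many votes.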

Even more interesting is the way HappyHorse appeared: it arrived with no official release, no clear documentation, and limited public access, yet it still outperformed established commercial systems on the leaderboard. This combination of benchmark dominance and limited availability is precisely what has driven its rapid rise in attention.

Part 2. What Do We Actually Know About HappyHorse 1.0?

Although public information is limited, several consistent patterns can be observed from benchmark reports and shared examples. HappyHorse 1.0 appears to focus heavily on semantic understanding and multimodal generation, allowing it to interpret complex prompts and translate them into structured visual outputs.

One of its most discussed capabilities is handling prompts that include multiple layers of information, such as subject interactions, environmental details, and cinematic elements like camera movement or lighting. In many showcased examples, the generated videos align closely with these instructions, which suggests a high level of prompt fidelity.
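As a rough illustration of what a "layered" prompt looks like, the sketch below assembles one from the layers named above: subject interaction, environment, camera movement, and lighting. The `LayeredPrompt` class and its field names are hypothetical helpers invented for this example. They are not part of any HappyHorse or Kling interface, and the sample text is made up.

```python
from dataclasses import dataclass


@dataclass
class LayeredPrompt:
    """Hypothetical container for the prompt layers discussed in the text."""
    subject: str       # who or what is in the scene, and how they interact
    environment: str   # setting and background details
    camera: str        # cinematic movement, e.g. a tracking shot
    lighting: str      # lighting style or time of day

    def render(self) -> str:
        """Join the non-empty layers into a single text prompt."""
        layers = [self.subject, self.environment, self.camera, self.lighting]
        return ", ".join(layer.strip() for layer in layers if layer.strip())


if __name__ == "__main__":
    prompt = LayeredPrompt(
        subject="a child hands an umbrella to an elderly man",
        environment="rainy city street at dusk with neon signs",
        camera="slow tracking shot from left to right",
        lighting="soft reflections on wet pavement",
    )
    print(prompt.render())
```

Keeping the layers separate makes it easier to change one element, such as the camera movement, and compare how closely each model follows the revised instruction.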

In addition, HappyHorse is often described as a multimodal system capable of generating both video and audio in a more integrated way. While detailed technical documentation is not publicly available, this design direction suggests an attempt to reduce fragmentation in the generation pipeline and improve synchronization between different media elements.

However, it is important to emphasize that most of these observations come from controlled benchmarks and curated outputs. Without broader public access, it is difficult to fully evaluate its stability, repeatability, and performance across diverse real-world scenarios.

Part 3. How Does Kling 3.0 Differ in Real Video Generation?

Kling 3.0 represents a more mature and application-oriented approach to AI video generation. Rather than focusing on achieving the highest possible benchmark scores, it emphasizes consistency, controllability, and temporal stability, which are critical for real-world usage.

From available demonstrations, Kling performs particularly well in maintaining character identity across frames, preserving object positioning, and ensuring smooth transitions between scenes. These capabilities are essential for longer videos or narrative-driven content, where even small inconsistencies can break immersion.

Another notable aspect of Kling is its relatively predictable behavior. Compared to models that prioritize peak output quality, Kling tends to deliver more stable and repeatable results. This makes it more suitable for workflows where users need to refine outputs iteratively or maintain visual continuity across multiple generations.

As a result, the comparison between HappyHorse and Kling is not simply about which model is "better," but rather about which type of performance is more relevant for different use cases.

Part 4. Prompt Performance: Direct Understanding vs Controlled Generation

Prompt handling is one of the most critical aspects of AI video generation, and it is also where the distinction between these two models becomes particularly clear.

HappyHorse 1.0 appears to rely on direct semantic interpretation, meaning that it can process complex, descriptive prompts and generate outputs that closely match the intended scene in a single attempt. This includes handling multiple entities, dynamic actions, and stylistic instructions without requiring extensive refinement.

Kling 3.0, on the other hand, behaves more like a guided generation system. While it supports prompt-based input, achieving optimal results often involves iterative adjustments and structured guidance. This makes it less dependent on a single prompt but more reliant on user control throughout the process.

From a practical perspective, this difference reflects two distinct philosophies. HappyHorse prioritizes immediate alignment between input and output, while Kling prioritizes flexibility and control over the generation process.

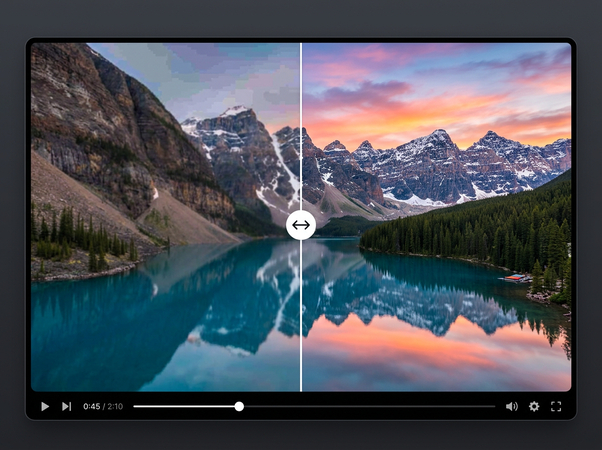

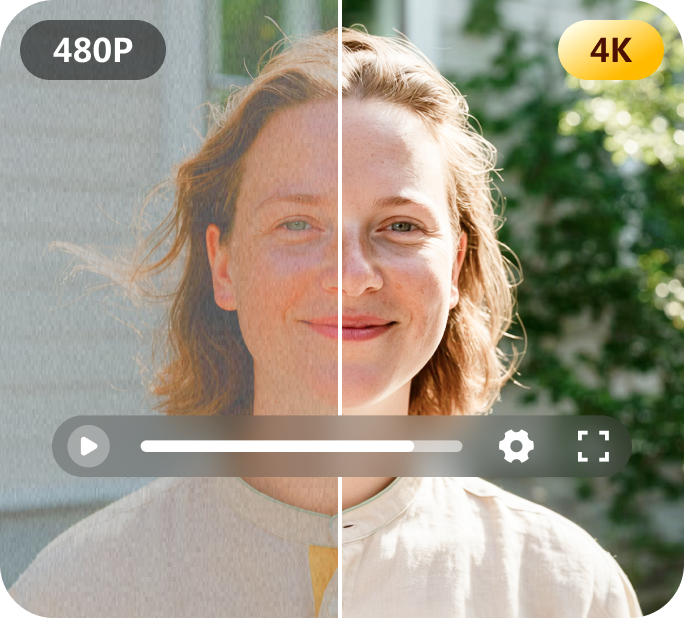

Part 5. Video Quality Comparison: Peak Output vs Stable Results

When comparing visual quality, HappyHorse 1.0 is often associated with more striking and detailed outputs, especially in short-form videos. Its ability to accurately reflect prompt details contributes to a higher perceived level of realism in individual generations.

However, this strength is closely tied to benchmark scenarios and curated examples. Without extensive real-world testing, it is difficult to determine how consistently this level of quality can be maintained across repeated generations.

Kling 3.0 takes a different approach by focusing on consistency over peak performance. While its outputs may appear slightly less detailed in some cases, they are generally more stable across multiple runs. This consistency is particularly valuable in production environments, where reliability is often more important than achieving the highest possible quality in a single attempt.

Part 6. Motion and Consistency: Short Clips vs Long Video Performance

Motion representation further highlights the difference between the two models. HappyHorse demonstrates strong performance in short, dynamic sequences, where it can generate fluid motion and maintain coherence within a limited timeframe.

Kling 3.0, however, is better suited for longer video generation. It excels at maintaining continuity over time, ensuring that characters, objects, and environments remain consistent throughout extended sequences. This makes it more appropriate for storytelling, sequential content, and multi-shot compositions.

This distinction reinforces the idea that HappyHorse is optimized for high-impact outputs, while Kling is optimized for sustained performance.

Part 7. The Real Gap: Advanced Models vs Everyday Usability

Despite their impressive capabilities, both HappyHorse 1.0 and Kling 3.0 highlight a significant gap between cutting-edge research and everyday usability.

HappyHorse remains largely inaccessible, with no official release or public interface. Kling, while more available, often requires a more structured and potentially time-consuming workflow to achieve optimal results. For many users, especially those without technical experience, these limitations can reduce practical usability.

This creates a clear divide between what these models can achieve in theory and what users can realistically accomplish in practice.

Part 8. A More Practical Choice: Simplifying AI Video Creation

For users who prioritize efficiency and accessibility, simpler tools can often provide more immediate value. Solutions like HitPaw Online AI Video Generator focus on reducing complexity and making video creation more approachable.

Instead of requiring detailed prompt engineering or iterative refinement, it streamlines the process of converting text into video. This makes it particularly useful for content creators, marketers, and everyday users who need quick results without dealing with experimental systems.

While it may not aim to compete with top benchmark models in raw performance, it addresses a different and equally important need: making AI video generation usable in real-world scenarios.

Final Thoughts

HappyHorse 1.0 and Kling 3.0 demonstrate how rapidly AI video technology is evolving, but they also show that accessibility remains a challenge. For most users, the goal is not to test the limits of AI models, but to create content efficiently. If ease of use and speed matter more in your workflow, tools like HitPaw Online AI Video Generator offer a more practical way to bring ideas to life.


Natalie Carter

Editor-in-Chief

My goal is to make technology feel less intimidating and more empowering. I believe digital creativity should be accessible to everyone, and I'm passionate about turning complex tools into clear, actionable guidance.
