HappyHorse-1.0 vs Seedance 2.0: Is the New Seedance Killer Worth the Hype?

As an AI tool reviewer, I've tested dozens of video generation models, but few have shifted the landscape as fast as HappyHorse-1.0 and Seedance 2.0. The space is no longer experimental; it's a full-blown competition for dominance. Seedance 2.0 set the benchmark with its multimodal power, but now HappyHorse-1.0 is making bold claims as a "Seedance killer." After hands-on testing and benchmark analysis, one question stands out: Is HappyHorse-1.0 truly better, or just hype?

Part 1: What Are HappyHorse-1.0 and Seedance 2.0?

From my testing experience, HappyHorse-1.0 and Seedance 2.0 represent two very different directions in AI video generation.

HappyHorse-1.0 Overview

HappyHorse-1.0 feels like a model built for creators who care about visual quality above all else. In my tests, it consistently delivered more cinematic outputs with smoother motion and fewer artifacts compared to most competitors. It supports both text-to-video and image-to-video workflows, but what really stands out is its temporal consistency: videos look stable, with minimal "AI jitter".

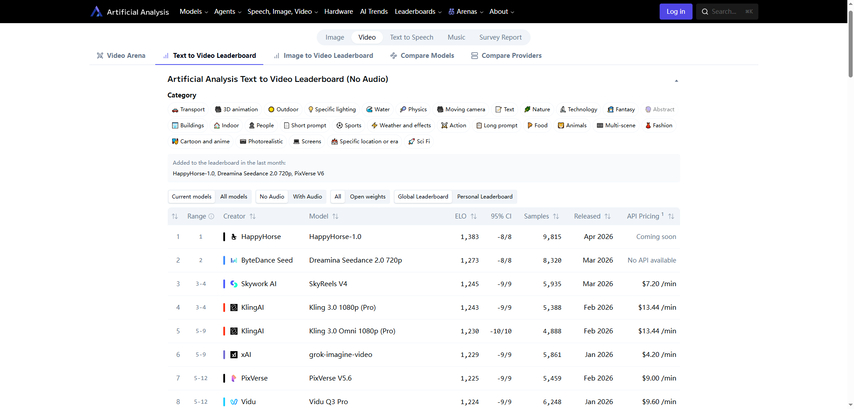

Key strengths I observed include strong character consistency (faces and identities don't drift easily) and a noticeably faster rendering pipeline. Benchmark-wise, HappyHorse-1.0 has been ranked at the top of Elo-based evaluations like AI Video Arena, which aligns with my subjective experience: it simply looks better in many cases.

Seedance 2.0 Overview

Seedance 2.0, on the other hand, is a feature powerhouse. Developed with a clear focus on multimodal generation, it supports text, image, and video inputs, and even native audio generation. This makes it far more flexible for complex projects.

In practice, I found Seedance excels at camera control and multi-shot composition. You can generate sequences that feel closer to real film production, with pans, zooms, and scene transitions. It's also one of the most widely discussed AI video models, largely because of its realism and versatility.

Who Built HappyHorse-1.0? (Speculative Insight)

One of the reasons HappyHorse-1.0 has generated so much buzz is its mysterious origin. The less clarity there is, the more curiosity it sparks, and the market has naturally started forming its own theories.

The most widely circulated speculation points to a connection with the Future Life Laboratory under Taotian Group (Alibaba ecosystem), potentially led by Zhang Di. Based on publicly available background information, Zhang Di previously played a key role in the Kling AI video project at Kuaishou, and before that, he was responsible for large-scale data and machine learning infrastructure at Alibaba's advertising division.

This theory has gained traction across industry discussions and self-media reports, mainly because the technical direction of HappyHorse-1.0, particularly its strength in motion realism and large-scale modeling, resembles capabilities seen in top-tier Chinese AI labs.

However, it's important to clarify from a professional evaluation standpoint: there is currently no official confirmation or authoritative source verifying this claim.

Part 2: HappyHorse-1.0 vs Seedance 2.0 (Full Comparison Table)

After testing both models and reviewing benchmark data, the gap between HappyHorse-1.0 and Seedance 2.0 becomes much clearer when you break it down across core dimensions. Instead of a simple "which is better," this comparison shows where each model actually leads.

| Category | HappyHorse-1.0 | Seedance 2.0 |
|---|---|---|
| T2V Quality (No Audio) | Ranked #1 (~1330+ Elo), strongest visual consistency | Ranked #2 (~1270+ Elo), slightly behind in motion stability |
| T2V Quality (With Audio) | Slightly lower ranking, audio less mature | Top-tier performance with better audio-video sync |
| I2V Quality (No Audio) | Leading performance (~1390+ Elo), excellent prompt alignment | Very strong (~1350+ Elo), but slightly less stable |
| I2V Quality (With Audio) | Competitive but not leading | Marginally ahead with tighter synchronization |
| Audio Generation | Available, but not core strength | Advanced native audio with dual-branch synchronization |
| Model Architecture | Single-stream large Transformer (focus on unified generation) | Dual-branch diffusion + Transformer hybrid (video + audio separation) |
| Motion & Physics | More natural, less jitter, stronger temporal coherence | Highly realistic, but occasional instability in complex scenes |
| Prompt Adherence | Very strong, especially for cinematic prompts | Strong, but depends on multimodal inputs |
| Known Provider | Not officially disclosed (speculated background) | Developed by ByteDance |
| Open Weights | Claimed to be upcoming (not yet fully available) | Not open-source |
| API Availability | No public API yet | Limited access (consumer tools available, official API restricted) |
| Access Channels | Primarily demo/web access | Available via platforms like Dreamina and CapCut (region-limited) |
| Ease of Use | Simpler, more output-focused | More complex, but highly flexible |
| Best Use Case | High-quality cinematic clips, ads, visual storytelling | Advanced workflows, filmmaking, multimodal projects |
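To put the Elo gaps in the table into perspective, here is a minimal Python sketch that converts a rating difference into an expected head-to-head preference rate. It assumes the leaderboard follows the standard Elo formula with a 400-point scale, which the article does not confirm, and it uses the approximate ratings quoted in the table, so treat the results as illustrative only. The function name `elo_expected_score` is my own.

```python
def elo_expected_score(rating_a: float, rating_b: float, scale: float = 400.0) -> float:
    """Expected share of pairwise wins for A over B under the standard Elo formula.

    Assumption: the leaderboard uses the classic 400-point logistic scale.
    """
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / scale))


# Approximate ratings quoted in the comparison table (HappyHorse-1.0, Seedance 2.0).
ratings = {
    "T2V (no audio)": (1330, 1270),
    "I2V (no audio)": (1390, 1350),
}

for task, (happyhorse, seedance) in ratings.items():
    p = elo_expected_score(happyhorse, seedance)
    print(f"{task}: HappyHorse preferred in about {p:.0%} of pairwise votes")
```

Under these assumptions, a 60-point lead works out to roughly a 59% preference rate and a 40-point lead to roughly 56%. That is a real but modest edge, which fits the "slightly behind" wording in the table.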

Key Takeaways from the Comparison

From a reviewer's perspective, the difference is very clear:

- HappyHorse-1.0 leads in visual quality benchmarks, especially in text-to-video and image-to-video without audio, where motion consistency and realism matter most.

- Seedance 2.0 leads in multimodal capability, particularly when audio, camera control, and complex scene composition are involved.

- Architecture explains the gap: HappyHorse's unified Transformer favors consistency, while Seedance's dual-branch system favors flexibility and synchronization.

Part 3: HappyHorse-1.0 Advantages

From my hands-on testing and benchmark analysis, HappyHorse-1.0 stands out as a quality-first model that prioritizes visual excellence over feature complexity.

- Best-in-class motion realism: The most noticeable advantage is its temporal consistency. Movements feel natural, with significantly reduced flickering, warping, or "AI jitter", a common issue in many video models.

- Top benchmark performance (especially without audio): In Elo-based evaluations (such as AI Video Arena), HappyHorse consistently ranks #1 in text-to-video and image-to-video tasks without audio, indicating strong human preference for its visual outputs.

- Faster production workflow: Compared to more complex multimodal systems, HappyHorse generates results faster and requires fewer adjustments, making it efficient for rapid content creation.

- Multilanguage support: It handles lip-sync across multiple languages effectively, which is valuable for global creators and marketing teams.

Best for:

- Advertising creatives

- Cinematic storytelling

- Product showcase videos

Part 4: Seedance 2.0 Advantages

In contrast, Seedance 2.0 is built as a multimodal creative system, offering more control and flexibility rather than pure visual dominance.

- Native audio + video generation: One of its biggest differentiators is built-in audio-video synchronization, enabling generation of scenes with dialogue, sound effects, or ambient audio.

- Strong multimodal capabilities: Seedance supports text, image, video, and audio inputs, allowing users to guide outputs with much higher precision.

- Advanced camera control: It offers cinematic controls such as pan, zoom, tracking, and multi-shot composition, making outputs feel closer to real film production.

- Flexible creative workflows: Seedance behaves more like an AI directing tool, suitable for building structured narratives rather than single clips.

- Public entry via ecosystem tools: While not fully open, it is accessible through platforms like Dreamina and CapCut (region-dependent), giving users a practical way to experiment.

Best for:

- Filmmakers and advanced creators

- Multi-scene storytelling

- Complex, structured video projects

Part 5: Limitations of HappyHorse-1.0 and Seedance 2.0

Even though both models are cutting-edge, neither is without trade-offs.

HappyHorse-1.0 Limitations

- Limited ecosystem transparency: The team and roadmap are still unclear, which raises questions about long-term support and stability.

- Weaker multimodal capabilities: Compared to Seedance, it offers less control over audio, camera movement, and complex scene composition.

- Early-stage platform: Access is still limited (mostly demo-based), with no widely available API or mature ecosystem yet.

Seedance 2.0 Limitations

- Limited global availability: Access is largely restricted to specific regions or platforms, which can be a barrier for international users.

- Higher complexity: Its powerful features come with a steeper learning curve, making it less beginner-friendly.

- Potential content restrictions: Like many large-scale AI systems, it may enforce stricter moderation, limiting certain creative use cases.

Part 6: HappyHorse-1.0 vs Seedance 2.0: Future of AI Video Models

From both industry reports and my own testing, AI video generation is clearly moving from "impressive demos" to production-level infrastructure. In 2026, the biggest shift is that these models are no longer isolated tools; they're becoming end-to-end creative systems.

First, multimodal convergence is accelerating. Leading models are now capable of generating video, audio, and even narrative structure in a single pipeline, reducing the need for separate tools. This is exactly where models like Seedance are heading, and where competitors like HappyHorse will likely evolve next.

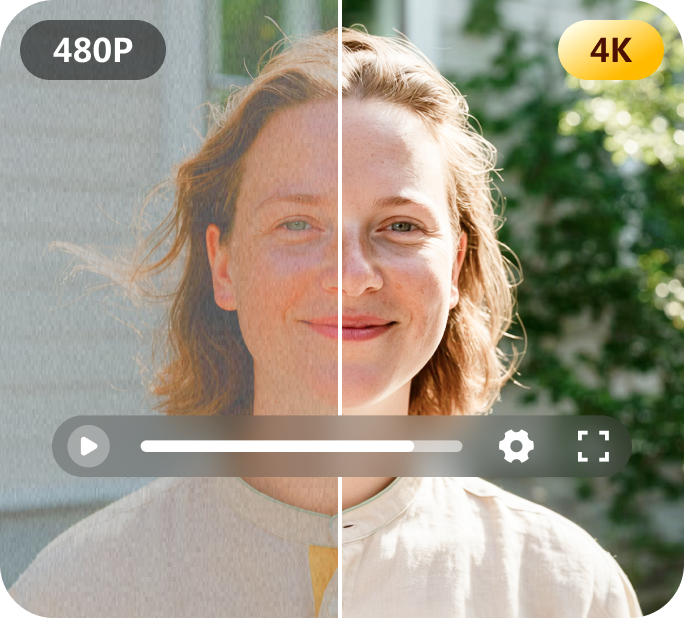

Second, quality is approaching real-world production standards. We're seeing native 4K outputs, longer clips, and far better physics simulation, with AI-generated videos becoming usable in commercial workflows. In some cases, AI has already reduced production time from days to minutes.

Third, real-time and interactive video generation is emerging. Research shows that new architectures are reducing latency dramatically, pushing toward live generation and interactive AI-driven scenes rather than pre-rendered clips.

Finally, the industry is shifting toward AI filmmaking engines: systems that handle scripting, generation, editing, and distribution in one place. Adoption is accelerating fast, with over 40% of enterprise creative teams already integrating AI video into workflows.

My take: the next generation of models won't compete on just "quality vs control". They'll combine both, turning AI video from a tool into a core creative platform.

Bonus Tip - Try Multiple AI Video Models in One Tool

If you don't want to be locked into a single model, using a platform that aggregates multiple AI video engines is a much smarter workflow, especially in such a fast-evolving space.

From my experience, HitPaw Online AI Video Generator is a practical option because it lets you test and compare different models in one place without switching tools. Instead of guessing which model works best, you can quickly iterate and see results side by side.

Why it's recommended:

- Access to multiple leading models (including Seedance, Veo, Kling and other emerging engines)

- No watermark, making outputs usable for real projects

- Supports both text-to-video and image-to-video workflows

- Clean, beginner-friendly interface with a low learning curve

Best for:

- Fast experimentation across different models

- Content creators testing styles and formats

- Marketers who need quick, scalable video production

FAQs about HappyHorse-1.0 vs Seedance 2.0

Q1. Is HappyHorse-1.0 better than Seedance 2.0?

A1. In the HappyHorse-1.0 vs Seedance 2.0 comparison, neither is universally better; it depends on your use case. HappyHorse-1.0 generally leads in visual quality, motion realism, and benchmark rankings (especially without audio), while Seedance 2.0 excels in multimodal generation, audio-video synchronization, and creative control. For cinematic visuals, HappyHorse often wins; for complex workflows, Seedance is more powerful.

Q2. Can I access HappyHorse-1.0 via API today?

A2. As of now, HappyHorse-1.0 does not offer a publicly available API. Most users can only access it through demo platforms or limited web interfaces. There are indications that API or open-weight releases may come in the future, but no official timeline has been confirmed. For developers, this means it is currently not production-ready for integration, unlike more mature ecosystems.

Q3. What hardware is required to run HappyHorse-1.0?

A3. Running HappyHorse-1.0 locally would likely require high-end hardware, typically multi-GPU setups (e.g., A100/H100-level GPUs), given its large-scale video generation architecture. However, since the weights are not yet openly available, most users interact with it via cloud or demo access. In practice, that means you don't need any local hardware today, which lowers the barrier for general users; hardware requirements only become relevant if full model weights are released.

Q4. What is the pricing of HappyHorse-1.0 vs Seedance 2.0?

A4. Pricing for HappyHorse-1.0 vs Seedance 2.0 differs in transparency and structure. Based on official HappyHorse pricing, the Starter Pack ($12.90 for 480 credits) works out to about $0.27 per video, while the Ultra Pack ($128.90 for 8700 credits) drops the cost to roughly $0.15 per video, making it more cost-efficient at scale. In comparison, Seedance 2.0 typically follows a subscription or usage-based pricing model, with estimated costs ranging from $0.10 to $0.80 per generated minute, depending on quality and platform access.
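The per-video figures above can be checked with a few lines of Python. This is a sketch under one assumption: the article does not state how many credits a video costs, but the quoted prices are consistent with roughly 10 credits per video, so `CREDITS_PER_VIDEO` is an inferred value, not an official one. Actual credit costs may vary by resolution or duration.

```python
# Assumption: ~10 credits per video, inferred from the quoted per-video prices.
CREDITS_PER_VIDEO = 10

# Pack name -> (price in USD, credits included), as quoted in the answer above.
packs = {
    "Starter": (12.90, 480),
    "Ultra": (128.90, 8700),
}


def cost_per_video(price_usd: float, credits: int,
                   credits_per_video: int = CREDITS_PER_VIDEO) -> float:
    """Cost of one video, given the pack price and how many credits it includes."""
    return price_usd / credits * credits_per_video


for name, (price, credits) in packs.items():
    per_credit = price / credits
    per_video = cost_per_video(price, credits)
    print(f"{name}: ${per_credit:.4f} per credit, ${per_video:.2f} per video")
```

With that assumption, the Starter Pack comes to about $0.27 per video and the Ultra Pack to about $0.15, matching the figures quoted. Note that the Seedance estimate is per generated minute rather than per video, so a direct comparison also depends on clip length.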

Conclusion

From my evaluation, the positioning is clear: HappyHorse-1.0 leads in realism and visual quality, while Seedance 2.0 dominates in control and multimodal flexibility. There's no absolute winner, only the right tool for your workflow. If current trends continue, the next generation of AI video models will likely merge both strengths, delivering cinematic quality and full creative control in a single system.

Natalie Carter

Editor-in-Chief

My goal is to make technology feel less intimidating and more empowering. I believe digital creativity should be accessible to everyone, and I'm passionate about turning complex tools into clear, actionable guidance.
