What Does Runway ML AI Video Generator Offer? A Detailed Guide to Its Features

Runway ML AI video generator has already gained traction, and with the release of its latest model, it has become the talk of the town. In this guide, you'll explore its capabilities, its core features, and its pricing plans to see whether it fits your projects. Let's begin!

Part 1. What Is Runway ML AI Video Generator All About?

Runway ML AI video generator is a web-based platform that you can use to create and edit video content.

The technology behind Runway's video generation has evolved significantly over time. When Gen 4 succeeded Gen 3, the biggest improvement was consistency. Earlier Runway AI video tools often changed faces, objects, or backgrounds from one frame to the next, while Gen 4 was designed to keep characters, environments, and motion stable throughout a clip. Runway explains that Gen 4 focuses on maintaining subject consistency and visual coherence when generating video from text and images (Runway Research, Introducing Gen 4, runwayml.com).

Another improvement in Gen 4 was better control over references. Users could upload an image and guide how the video should look, instead of relying only on text. This made it easier to keep a certain style, character design, or mood across scenes.

After that, Runway released Gen 4 Turbo. The purpose of Turbo was speed. It was built to generate videos faster and use fewer credits compared to the standard Gen 4 model while still keeping strong visual quality. This made it useful for creators who need to test ideas quickly or produce short clips for social media.

The newest version, Gen 4.5, improves realism and prompt accuracy. It produces more natural motion, better lighting behavior, and stronger prompt adherence, so the output follows your description more closely and movement looks less artificial.

Independent coverage, such as a report in Times of AI, has also noted that Gen-4.5 performs strongly against other AI video systems, especially in motion smoothness and scene quality.

That progression is what turned Runway from an experimental AI tool into one of the most widely used AI video generation platforms today.

Part 2. Key Features of Runway ML AI Video Generator

Runway ML AI video generator comes packed with features that are very helpful to creators in various niches:

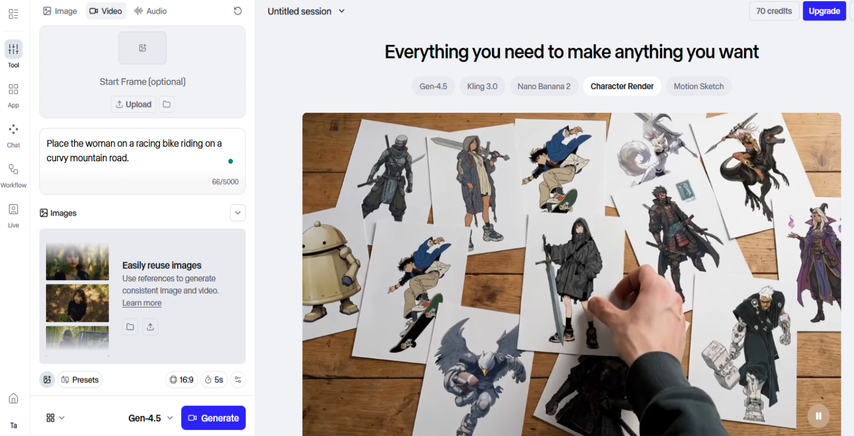

1. Text to Video Generation

Runway ML AI video generator has a text-to-video feature, and it does exactly what it sounds like. You enter a prompt, and it turns that into a short video.

Here, Runway gives you its latest model, Gen-4.5. With Gen-4.5, uploading an image is optional, so you can start from a text prompt alone.

Now here's where it gets even more interesting.

Runway doesn't just stop at one model. Inside the platform, you also get access to other text-to-video models like:

- Kling 2.6 Pro

- Veo 3

- Sora 2

- Sora 2 Pro

- WAN 2.6 Flash

So you're not locked into one engine. You can switch depending on what kind of result you want. It works more like a creative studio than a single tool.

And then we get to the presets. This is where things start becoming more controlled instead of random.

There are three preset categories:

- Camera

- Movement

- Action

Under each of these categories, you can find different presets that help guide the tool according to what you want in your video. So instead of writing one long, complicated paragraph, you can use these presets to shape the outcome. It becomes more intentional.

Then you choose your aspect ratio. So if you're making something for YouTube, you can go widescreen. If it's for Instagram or TikTok, you switch to vertical. There's flexibility here.

You can also choose the video duration, anywhere from 2 to 10 seconds, which controls how long your generated clip will be.

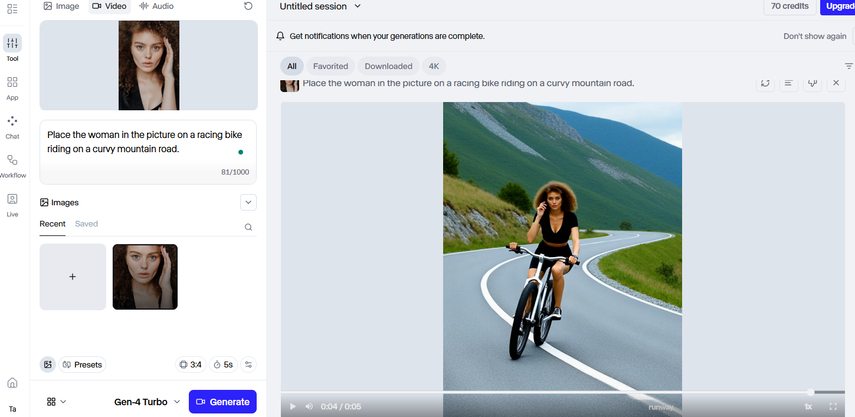

2. Image to Video Animation

So in Image to Video, Runway provides its own native models, which are Gen 4, Gen 4 Turbo, and Gen-4.5. You can select any one of them depending on your requirement and start building your video from an image instead of plain text.

In Gen 4 and Gen 4 Turbo, you need to upload a start frame first. After that, write a prompt explaining what should happen to that image, and the Runway ML AI video generator generates the video accordingly. The uploaded image acts as the base, and your prompt guides the transformation.

As discussed above, the start frame is optional in Gen-4.5.

Other than Runway's native models, there are additional image-to-video models available under Kling, Google, OpenAI, and WAN. These models allow you to upload not just a start frame, but also an end frame. That means you can define how the video begins and how it finishes, and the AI generates the transition between them.

The basic presets remain the same here. You still get Camera, Movement, and Action categories to guide the direction of the output. You can also select the aspect ratio depending on where the video will be used, just like in text-to-video.

There is also an option that allows you to reuse images to generate consistent videos. This helps maintain character consistency across different clips. Under this option, you can generate consistent characters with natural lighting, moderate quality, and natural subject expression.

You also get access to a text prompt guide that helps you understand the kind of prompt you can write to achieve the desired outcome.

Overall, the results here are strong and practical. If someone wants more control over visuals by starting with an image, this feature gives that structure while still allowing creative direction through prompts and presets.

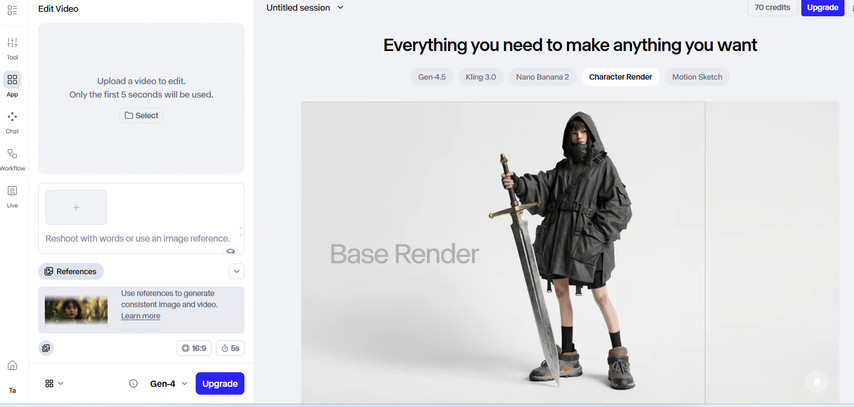

3. Video to Video with Gen-4 Aleph

Runway ML AI video generator also offers video-to-video generation. It is not shown as a separate big button on the main dashboard, so at first glance, you might not notice it. The feature is actually embedded inside the Apps section.

When you go into Apps and select Edit Video, the Gen-4 Aleph model is automatically selected. Aleph is the model responsible for video editing with text prompts. Instead of generating something from scratch, you can modify an existing video.

There is one important restriction here. You can upload a video up to five seconds long. If your video is longer than five seconds, you need to trim or cut it first and then upload the specific five-second section that you want to edit.
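If your footage is longer than five seconds, any video editor can make the cut. For those comfortable with the command line, the sketch below shows one way to do it with ffmpeg. It assumes ffmpeg is installed separately (it is not part of Runway), and the file names and start time are hypothetical. The function only builds the command, so you can inspect it before running it.

```python
import shlex


def build_trim_command(src: str, dst: str, start_seconds: float,
                       length_seconds: float = 5.0) -> list[str]:
    """Build an ffmpeg command that cuts a clip of length_seconds starting at start_seconds."""
    if start_seconds < 0:
        raise ValueError("start_seconds must be non-negative")
    # The five-second ceiling mirrors the Aleph upload limit described above.
    if not 0 < length_seconds <= 5:
        raise ValueError("length_seconds must be between 0 and 5")
    return [
        "ffmpeg",
        "-ss", f"{start_seconds:g}",   # seek to the section you want to edit
        "-i", src,
        "-t", f"{length_seconds:g}",   # keep only this many seconds
        "-c:v", "libx264",             # re-encode instead of stream-copying so the cut
        "-c:a", "aac",                 # does not snap to the nearest keyframe
        dst,
    ]


cmd = build_trim_command("interview.mp4", "interview_5s.mp4", start_seconds=12)
print(shlex.join(cmd))
# prints: ffmpeg -ss 12 -i interview.mp4 -t 5 -c:v libx264 -c:a aac interview_5s.mp4

# To actually run it (requires ffmpeg on your PATH):
# import subprocess; subprocess.run(cmd, check=True)
```

Re-encoding is slower than copying the streams, but it keeps the trimmed section aligned with the timestamp you chose, which matters when every one of the five seconds counts.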

Once uploaded, you can write a text prompt explaining what changes you want in that clip. The model reads your prompt and edits the video accordingly. You are basically directing the transformation through text.

You also get the option to use an image reference along with your prompt. That image acts as visual guidance for the edit, helping the Runway ML AI video generator understand the style or elements you want applied to the video.

So if someone already has a clip and wants to change its look, atmosphere, or subject details using text instructions, Gen-4 Aleph handles that process directly inside the Edit Video section.

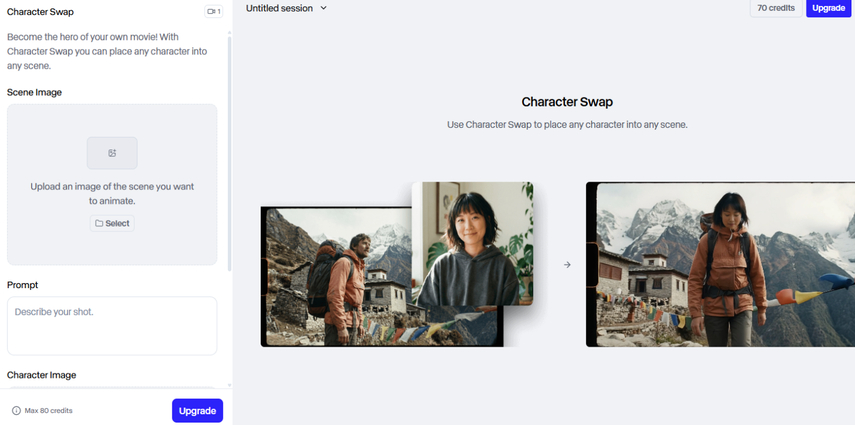

4. Video Editing & Enhancement

Runway is not limited to video creation. Once you generate a video, or even if you upload your own, you get access to additional tools that help you modify and improve it. It works more like a complete creative suite than a single-purpose generator.

One of the features allows you to swap a character in a video. If you want to replace one subject with another, you can do that without rebuilding the entire scene. The system adjusts the video based on your changes.

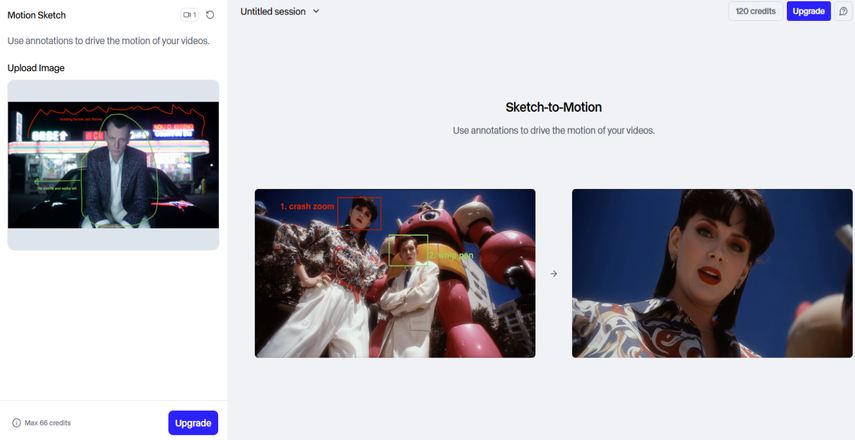

There is also a Motion Sketch option. This allows you to draw movement directions directly onto the frame, guiding how elements in the video should move. Instead of relying only on prompts, you visually indicate motion paths.

Then there is Act-Two, which animates characters by transferring a human actor's performance from a video onto a reference image or video. It supports full-body, head, face, and hand tracking, which allows for highly realistic character animation, including lip syncing and emotional expression. Performance clips are limited to 30 seconds.

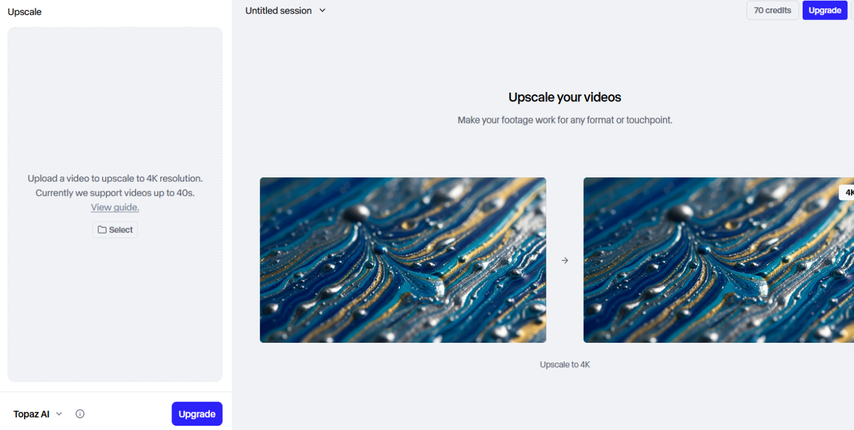

After your video is ready, you are not stuck with the original resolution. Runway gives you the option to upscale your video up to 4K.

That means you can enhance the final output for higher-quality playback without recreating the project.

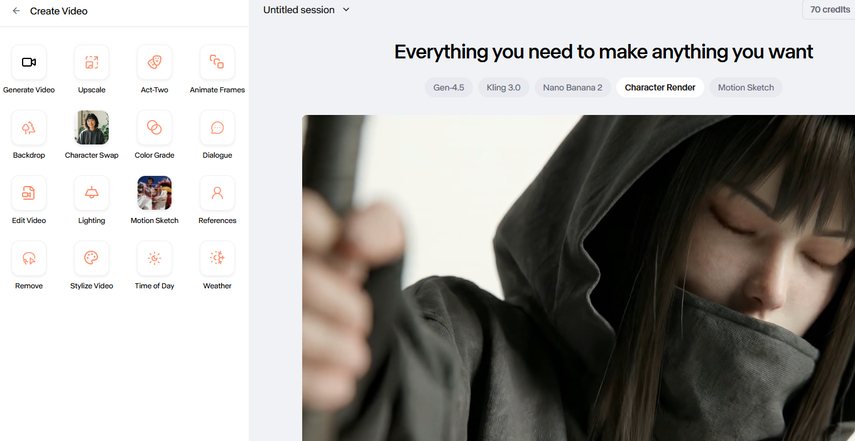

5. Creative Video Tools in Apps Section

The Apps section in the Runway ML AI video generator is packed with tools and different models that support your creative process. Here, you get access to models like Nano Banana 2 and Seedream 5.0, which you can use to first generate images and then turn those images into videos. So instead of starting directly with motion, you can build your visual foundation first.

Inside the Create Video area, you get a wide range of tools beyond just upscale, Act-Two, and Animate Frames. You can experiment with lighting adjustments, remove the background, stylize the video, and even change the time of day and weather conditions.

There is also an option where you can upload an image of a character and type a line of dialogue in the prompt box. Runway then generates a video in which the character speaks according to your input. This helps when you want to create character-driven clips without recording new footage.

Another feature is the Backdrop option. You can upload a video here, but only the first five seconds will be used. After uploading, you can describe the background and apply a preset to modify how it appears in the preview.

You also get access to a Color Grade option. This allows you to change the overall tone of the video using different presets. For example, you can apply Golden Hour, Nordic, Nordic Noir, Horror Green, and other styles to shift the visual mood of your clip.

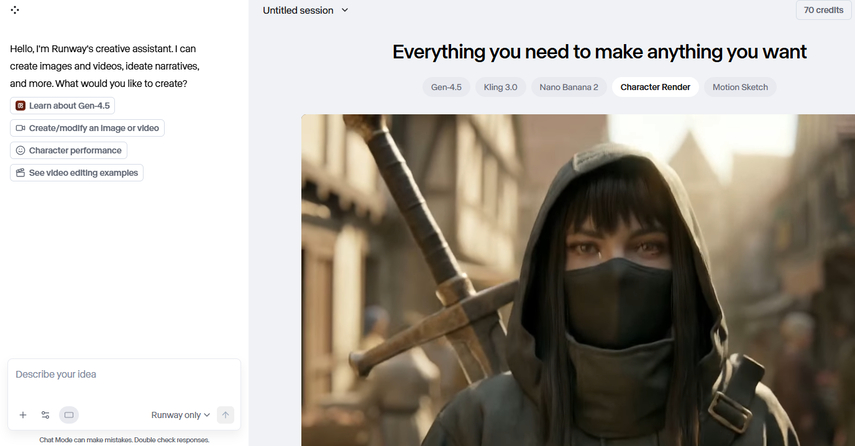

6. Chat Mode and Conversational Prompting

In Chat Mode of the Runway ML AI video generator, you talk to the AI and tell it what you want to create. It can help you generate videos, build narratives, structure ideas, and guide the overall direction of your project. You can also treat it like a video-to-video editor by uploading footage and instructing it on what to modify.

You can add media directly inside Chat. That media can be something you already created in Runway or a file uploaded from your computer. The assistant then uses that media as context while following your instructions.

For example, if you upload a video and tell the assistant to enhance fidelity or fix artifacts, it will process that request and apply the necessary adjustments.

Aspect ratio options are available here as well. You can choose 16:9, 1:1, or 9:16 and switch between them depending on what you want to create, so you are not locked into a single format.

There is also model selection inside Chat Mode. You can choose to use Runway's native models or select custom models for certain tasks. However, for video generation specifically, the model is restricted to Runway Gen-4.5 here.

Prompting plays a major role here. You can describe camera controls, shot sequences, transitions, timestamps, and detailed scene instructions. A practical approach is to first ask the Chat assistant to expand your basic idea into a structured prompt. It can generate a complete, organized instruction set for you, which you can then use to produce the final video.
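To make the idea of a structured prompt concrete, here is a small sketch that turns a scene description and a shot list with timestamps into one block of text you could paste into Chat Mode. It is not a Runway API: `Shot` and `build_prompt` are hypothetical helpers, and the 10-second ceiling is an assumption based on the 2 to 10 second range of Runway's native models.

```python
from dataclasses import dataclass


@dataclass
class Shot:
    start: float   # seconds from the beginning of the clip
    end: float
    camera: str    # camera instruction, e.g. "slow dolly in"
    action: str    # what happens during the shot


def build_prompt(scene: str, shots: list[Shot], max_length: float = 10.0) -> str:
    """Combine a scene description and an ordered shot list into one text prompt."""
    lines = [f"Scene: {scene}"]
    previous_end = 0.0
    for i, shot in enumerate(shots, start=1):
        # Shots must be in order, must not overlap, and must fit the clip length.
        if shot.start < previous_end or shot.end <= shot.start:
            raise ValueError(f"shot {i} overlaps the previous shot or has an invalid range")
        if shot.end > max_length:
            raise ValueError(f"shot {i} runs past {max_length:g} seconds")
        lines.append(
            f"[{shot.start:g}s-{shot.end:g}s] Camera: {shot.camera}. Action: {shot.action}."
        )
        previous_end = shot.end
    return "\n".join(lines)


prompt = build_prompt(
    "A lighthouse on a rocky coast at dusk, light rain",
    [
        Shot(0, 4, "slow aerial push-in", "waves crash against the rocks"),
        Shot(4, 10, "low-angle static shot", "the lighthouse beam sweeps across the water"),
    ],
)
print(prompt)
```

Writing the shots out with explicit time ranges forces you to decide what happens in each part of the clip before you generate, which is the same structure you would otherwise ask the Chat assistant to produce for you.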

Part 3. How Much Does Runway AI Video Cost?

Runway ML AI video generator offers a free version that gives you 125 credits. These credits are mainly there to show you how the platform works, but they are not enough to generate anything substantial, and you will quickly be prompted to subscribe to the Pro plan.

The cost of the Pro subscription is $35 per user per month. If you switch to yearly billing, the cost drops to $28 per user per month, but it is billed annually at $336. This plan unlocks access to all models, including the latest video models, along with the available tools and upcoming features.
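The difference between the two billing options is simple arithmetic, sketched below using only the prices quoted above ($35 per month on monthly billing, $28 per month on annual billing). The function name is illustrative, and actual prices may change or vary by region and taxes.

```python
MONTHLY_PRICE = 35  # USD per user per month, billed monthly (figure from this guide)
ANNUAL_RATE = 28    # USD per user per month, billed annually (figure from this guide)


def yearly_costs(users: int = 1) -> dict[str, int]:
    """Compare one year of Pro on monthly billing versus annual billing."""
    if users < 1:
        raise ValueError("users must be at least 1")
    monthly_billing = MONTHLY_PRICE * 12 * users
    annual_billing = ANNUAL_RATE * 12 * users
    savings = monthly_billing - annual_billing
    return {
        "monthly_billing": monthly_billing,
        "annual_billing": annual_billing,
        "savings": savings,
        "savings_percent": round(100 * savings / monthly_billing),
    }


print(yearly_costs())
# prints: {'monthly_billing': 420, 'annual_billing': 336, 'savings': 84, 'savings_percent': 20}
```

For a single user, annual billing costs $336 instead of $420 for twelve monthly payments, a saving of $84, or 20%.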

In Pro, you can remove watermarks, upscale videos, and get shorter wait times during video generation. The monthly allocation is 2,250 credits. In practical terms, that covers roughly 187 seconds of Gen-4.5, 456 seconds of Gen-4 Turbo, 112 Nano Banana Pro images at 2K or 56 at 4K, or around 187 seconds of Gen-4.

One important advantage of the Pro plan is flexibility. If you run out of credits, you are not stuck waiting for the next cycle. You can always buy more credits whenever you need to generate more content.

Part 4. FAQs of Runway ML AI Video Generator

Q1. Is the Runway ML AI video generator free?

A1. Although Runway provides a free tier with 125 credits to explore the platform, you need to subscribe to the Pro plan to generate videos reliably from text, images, or video clips.

Q2. Is Runway the best AI video generator?

A2. Runway is widely recognized as one of the most powerful and versatile AI video generators, with advanced tools, high-fidelity output, and creative flexibility that many creators value. However, it is not universally ranked as the single best; other models like Sora, Veo, and Kling also compete at the top level, depending on your needs. Reviews often place Runway among the top tools in the category.

Q3. How long of a video can Runway AI make?

A3. With Runway's native models, including Gen 4, Gen 4 Turbo, and Gen 4.5, you can generate videos between 2 and 10 seconds per clip. If you need slightly longer outputs, you can use other integrated models like Kling, Veo 3, Sora, or WAN inside Runway.

Conclusion on Runway ML AI Video Generator

In this Runway ML AI video generator guide, you explored how the platform handles video creation from text, images, and reference clips. You saw how Gen 4, Gen 4 Turbo, and Gen 4.5 work, along with video-to-video editing through Gen-4 Aleph. You also learned about the built-in tools for character swaps, motion control, and upscaling, as well as the creative tools inside the Apps section. Finally, you reviewed the pricing structure and what the free and Pro plans offer. With all that in mind, you now have a clear picture of what Runway can deliver.



Natalie Carter

Editor-in-Chief

My goal is to make technology feel less intimidating and more empowering. I believe digital creativity should be accessible to everyone, and I'm passionate about turning complex tools into clear, actionable guidance.

